Roger Spitz’s ‘Techistentialism’ Emerges as a Defining Global Zeitgeist for the AI Era

"Building a Human Resilience Infrastructure for the AI Age," Imagining the Digital Future Center, Elon University, April 2026

Elon University, Forbes, and GovTech Spotlight Spitz’s Frameworks as Central to the Evolving Discourse on Human Agency and Artificial Intelligence

SAN FRANCISCO, CA, UNITED STATES, April 6, 2026 /EINPresswire.com/ -- The Disruptive Futures Institute (DFI) today highlighted growing global attention around Techistentialism, the framework developed by futurist Roger Spitz, following its inclusion in the new report from the Elon University Imagining the Digital Future Center and recent international coverage in Forbes, Estadão, MIT Technology Review Brasil, CNN, and GovTech.

The report, “Building a Human Resilience Infrastructure for the AI Age,” spotlights Spitz’s Techistentialism framework and his concept of “Superstupidity” - the risk that humans become dangerously dependent on systems they do not understand - as a growing concern in the public debate over artificial intelligence. It frames the urgent conversation as a tension between Superintelligence and Superstupidity.

Increasingly recognized as one of the defining cultural touchstones of 2026, Techistentialism is no longer just an emerging trend. It now serves as the “spirit of the times,” providing a shared vocabulary for navigating the accelerating “Intelligence Shift.”

TECHISTENTIALISM: A PROPHETIC WARNING GOES VIRAL

The centerpiece of this cultural breakthrough is the identification of the critical tension between ‘Superstupidity’ and ‘Superintelligence’. While the tech industry long obsessed over Artificial Superintelligence (ASI), Spitz successfully shifted the discourse toward an immediate, human-centric risk. In the April 2026 Elon University report, Spitz’s essay - “Will superstupidity be as dangerous as superintelligence?” - became the focal point for major media outlets.

GovTech headlined the findings: “Elon University Research Warns Greatest AI Risk Is ‘Superstupidity’.” The concept captures a widespread anxiety: that as humans become “dangerously reliant on systems they do not understand,” they risk a trajectory that Spitz notes makes the 2006 film Idiocracy seem “prophetic.”

INSTITUTIONAL AUTHORITY AND DISTINGUISHED PEERS

Positioned within one of the most distinguished assemblies of global experts on the future of AI, Roger Spitz’s work appears alongside contributions from internet pioneer Vint Cerf, Python creator Guido van Rossum, and leading technology thinkers such as Sherry Turkle and forecaster Paul Saffo in the Elon University Imagining the Digital Future Center’s report. This rare convergence of foundational architects of the digital age, top academic authorities, and globally recognized futurists underscores the significance of Spitz’s inclusion.

Among these voices, Spitz’s concept of “superstupidity” stands out as one of the report’s most resonant and publicly cited ideas - featured among the “striking predictions” highlighted in the Center’s official release. His Techistentialism framework complements and extends the work of peers focused on technical systems and social impact, positioning Spitz as a leading voice translating complex AI risks into a broader cultural, philosophical, and strategic discourse.

THE BOARDROOM SHIFT: FROM C-SUITE TO A-SUITE

This milestone marks ten years since Spitz first articulated the Techistential philosophy in 2016. Formerly the Global Head of Technology M&A at one of the world’s largest investment banks, Spitz identified a structural disconnect between human judgment and automated systems.

“As we enter 2026, the preservation of human agency ceases to be a default condition; it demands deliberate assertion,” says Roger Spitz, Chair of the Disruptive Futures Institute. “We are witnessing the rise of the ‘A-Suite’ (Algorithmic Executives), where prescriptive AI systems autonomously execute decisions. Our responsibility is to understand how these tools - no longer discernible from the architects - are redefining humanity itself.”

The Disruptive Futures Institute calls the shift from the C-Suite to the A-Suite a ‘sleepwalking migration’ - a transformation in which prescriptive AI systems begin to autonomously execute higher-order algorithmic decisions, threatening to leave human leaders as ‘idle machines.’

TECHISTENTIAL CENTER: ASSERTING HUMAN INTELLIGENCE

As part of its continued investment in advancing human-centric AI, the Disruptive Futures Institute has established the Techistential Center for Human & Artificial Intelligence. This dedicated research and education initiative focuses on the impacts, governance, and ethics of AI. The Center explores the future of strategic decision-making in a world where human and machine intelligence are increasingly intertwined, equipping leaders with the frameworks and tools needed to navigate the accelerating “Intelligence Shift.”

The transition of Techistentialism into the public consciousness marks the evolution of the mission from diagnosis to active defense. As 2026 takes shape - a year closer to 2050 than to 2000 - the Disruptive Futures Institute is launching a series of global initiatives to build a human resilience infrastructure in our complex, unpredictable world:

• The AAA Framework: A proprietary system for becoming Anticipatory (foresight), Antifragile (growing stronger from shocks), and Agile (bridging today’s choices with tomorrow’s possibilities).

• The 6 i’s Cognitive Toolkit: Empowering human leaders to retain value over prescriptive systems through Intuition, Inspiration, Imagination, Improvisation, Invention, and the Impossible.

• Existential Literacy: A new educational priority focused on cultivating an understanding of how technologies shape worldviews, goals, values, and identities.

“While algorithms can calculate the probable, only humans can invent the impossible,” Spitz concludes. As Techistentialism emerges in public discourse, the Techistential Center continues its mission to reclaim human agency as the architect of the futures.

To learn more about the Disruptive Futures Institute:

• Email: info@disruptivefutures.org

• Explore: https://www.disruptivefutures.org/

• The Disruptive Futures Institute’s AI Center - Techistential Center for Human & Artificial Intelligence: https://www.techistential.ai/artificial-intelligence

• Media inquiries: media@disruptivefutures.org

For the Elon University Imagining the Digital Future Center report (April 2026):

• “Building a Human Resilience Infrastructure for the AI Age”: https://imaginingthedigitalfuture.org/reports-and-publications/human-resilience-in-the-age-of-ai/

###

APPENDIX: MEDIA BRIEFING NOTES TO EDITORS

I. DETAILED CONTEXT AND KEY DEFINITIONS

1. Techistentialism: A play on “technology” and “existentialism,” this philosophy - coined by Roger Spitz in 2016 - studies existence and decision-making in an AI world where technology and human life are inseparable. It argues that technological “existential risk” includes the loss of human autonomy and agency. It draws on philosophical foundations from Martin Heidegger (technology as a way of “revealing”) and Jean-Paul Sartre (“existence precedes essence”).

• Techistentialism broadens the definition of technology’s “existential risks” to include the curtailment of human agency. This brings the discussion back to a core meaning of “existential,” beyond physical threats, to consider the deep importance of meaningful human autonomy.

• Instead of treating AI primarily as a speculative future danger, Techistentialism also focuses on immediate, human-centric vulnerabilities, advocating for the cultivation of human capabilities - Antifragile, Anticipatory, and Agility - as essential for relevance in the 21st century.

2. Techistential’s ‘Superstupidity’ vs Superintelligence Warning: Central to the 2026 media storm is the warning that the greatest existential risk of the AI era is not merely machines becoming “too smart,” but humans becoming “superstupid.” Developed by Roger Spitz, this Techistential framework describes a state where human cognitive, decision-making, and ethical capacities fail to keep pace with the complex systems we have built, leading to a dangerous over-reliance on technologies we no longer fully understand.

The Techistential condition manifests through several critical factors:

• Cognitive De-skilling: An over-reliance on prescriptive algorithms (systems that recommend specific actions) leads to a fundamental erosion of the human decision-making value chain. As we outsource judgment, our independent reasoning and the “metaskill of learning” erode.

• Outsourcing Judgment: The shift of moral and ethical reasoning to systems that prioritize efficiency over effectiveness. When we outsource thinking and take shortcuts to outcomes, we also outsource the interpretive presence, the discovery process, and the moral muscle to ask what something means or whether it should be done.

• Algorithmic Determinism: The loss of the ability to question, audit, or contest automated decisions. This creates an “Auditability Gap” where responsibility is diffused until errors in critical infrastructure - from healthcare to automated defense - become irreversible.

• Loss of Human Agency: AI invisibly curates our choices, decisions, and worldviews, turning free acting agents into passive spectators. Techistentialism argues that when machines author our essence, contingency vanishes and existential freedom dies.

• Systemic Fragility: Tightly coupled, nonlinear AI systems are prone to cascading failures. Algorithmic misalignment can trigger high-impact catastrophes at machine speed, often before a human agent can intervene.

• Data-Fueled “Stupidity”: AI acts incompetently when fueled by flawed or biased data. Unlike human learning, more data does not guarantee wisdom - only scaled errors. “Stupid” machines operating in complex, unpredictable, nonlinear environments represent a concrete existential threat.

• Behavioral Degradation: Humans risk becoming “idle machines” - losing curiosity, critical inquiry, and the ability to navigate life’s inherent paradoxes, tensions, and contradictions. Spitz warns that without becoming AAA (Anticipatory, Antifragile, Agile), the satirical future of the film Idiocracy becomes a prophetic trajectory.

Spitz’s core warning is that Superstupidity can counter any level of intelligence. The danger is a society that is technically advanced but cognitively hollow - a future where we possess the most powerful tools in history but have lost the wisdom, agency, and accountability to steer them. To counter this, the Disruptive Futures Institute advocates for existential literacy and the AAA Framework as the only viable infrastructure for human resilience.

3. The AAA Framework & Existential Literacy

To become “future-ready,” Spitz advocates for:

• Antifragility: Building systems that benefit from shocks, rather than just resisting them (based on Nassim Taleb’s concepts but applied to organizational strategy and systemic change).

• Anticipatory: Developing the capacity to spot early signals of change and integrate next-order impacts.

• Agility: Cognitive and strategic flexibility that allows leaders to bridge long-term vision with real-time pivots.

MEDIA REFERENCING SPITZ’S PAPER

Full Report: Anderson, J. & Rainie, L. (2026). Building a Human Resilience Infrastructure for the AI Age. Elon University. Full report PDF:

https://imaginingthedigitalfuture.org/wp-content/uploads/2026/03/ITDF-Human-Resilience-full-report-3.20.26.pdf

Techistentialism Paper: Spitz, R. (2026, April). Will superstupidity be as dangerous as superintelligence? In L. Rainie & J. Anderson (Eds.), Building a human resilience infrastructure for the AI age. Elon University Imagining the Digital Future Center:

• Elon University Research Essay: Spitz, Roger. (2026, April). Will superstupidity be as dangerous as superintelligence? Imagining the Digital Future Center.

https://imaginingthedigitalfuture.org/will-superstupidity-be-as-dangerous-as-superintelligence/

GovTech Analysis (April 2026):

• GovTech Coverage: Gagnon, Dallas. (2026, April 2). Elon University Research Warns Greatest AI Risk Is 'Superstupidity'. GovTech. https://www.govtech.com/education/higher-ed/elon-university-research-warns-greatest-ai-risk-is-superstupidity

“The existential danger to people may not come from AI becoming too intelligent, but from humans becoming dangerously reliant on systems they do not understand,” wrote Roger Spitz, founder of the Disruptive Futures Institute in San Francisco.

Forbes Article: Cohen, Nirit. (2026, April 5). If AI Is Making Decisions At Work, What’s Our Job? Forbes.

• Nirit Cohen (Forbes Contributor), in her April 5, 2026 feature, “If AI Is Making Decisions At Work, What’s Our Job?”, cites Spitz’s “Superstupidity” to explain the erosion of organizational judgment. https://www.forbes.com/sites/niritcohen/2026/04/05/if-ai-is-making-decisions-at-work-whats-our-job/

“Work continues, decisions get made, but the conditions of judgment shift without being noticed. People become more comfortable validating and executing than questioning and interpreting. Roger Spitz calls this ‘superstupidity,’ in contrast to superintelligence, where humans become more reliant on AI than their understanding warrants.” - Nirit Cohen, Forbes, 5 April 2026

ROGER SPITZ QUOTES FROM ELON UNIVERSITY REPORT

“The existential danger to people may not come from AI becoming too intelligent, but from humans becoming dangerously reliant on systems they do not understand.”

“The question is not how much machines will augment human decision-making, but whether humans will remain involved in the process at all. If humans fail to sufficiently develop our capabilities, rapidly learning machines could surpass us. To shift the relationship between humans and machines, AI does not have to reach AGI. It just needs to become better than us at handling complex systems.”

“Maybe the existential risk is not machines taking over the world or reaching human-level intelligence, but rather the opposite, where human beings start thinking and responding like idle machines – unable to connect the emerging dots of our complex, systemic world. … Superstupidity can counter any level of intelligence.”

“To assure that ‘Idiocracy’ is not a harbinger of the future, updating our education system has now become an existential priority. Education’s effectiveness in problem-solving should be evaluated on whether it can help humanity become relevant and future-ready for our complex 21st century. We should inspire passion, nurture curiosity, emphasize uncertainty, develop range and foster critical thinking, using Socratic questioning to examine assumptions.”

ORIGINAL TECHISTENTIALISM REFERENCES IN ROGER SPITZ CITATIONS

• Spitz, R. “The Future of Strategic Decision-Making”. Journal of Futures Studies, July 26, 2020, available at https://jfsdigital.org/2020/07/26/the-future-of-strategic-decision-making/

• Spitz, R., and Nykänen, R. “An Existential Framework for the Future of Decision-Making in Leadership”. In T. Mengel (Ed.), Leadership for the Future: Lessons from the Past, Current Approaches, and Future Insights. Cambridge Scholars Publishing: Newcastle upon Tyne, 2021.

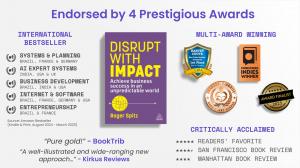

• Spitz, R. Disrupt With Impact: Achieve Business Success in an Unpredictable World. Kogan Page: London, 2024.

• Spitz, R. “The ‘Decade of Techistentialism’ is here now.” Disruptive Futures Institute, December 30, 2025. See https://thrivingondisruption.substack.com/p/the-decade-of-techistentialism-is

• Spitz, R., and Romani, B. “‘Risco existencial da IA está em como ela afeta nossa liberdade de escolha’, diz futurista”. Estadão, October 17, 2025. Official link: https://www.estadao.com.br/link/inovacao/risco-existencial-da-ia-esta-em-como-ela-afeta-nossa-liberdade-de-escolha-diz-futurista/ . Disruptive Futures Institute Substack: https://thrivingondisruption.substack.com/p/the-existential-risk-of-ai-lies-in

• Spitz, R., and Lihotzky, E. S. “#11 - Techistentialism: Trust, Agency, and Decision-Making in Tech Acceleration”. The in-between trust podcast, November 5, 2025. See https://open.spotify.com/episode/2jpYlw1KkGGSktlbL4Ts4E

ABOUT ROGER SPITZ

Roger Spitz is a leading authority on artificial intelligence, strategic foresight, and the future of decision-making. He coined the term Techistentialism and founded the Techistential Center for Human & Artificial Intelligence, advancing research and education on AI’s impacts, governance, and ethics.

Spitz serves on the AI Council of the Indian Society for Artificial Intelligence, the World Economic Forum’s AI Global Alliance, and the Global Centre for AI Excellence in San Francisco. He is a venture partner at Berkeley SkyDeck and Vektor Partners, advising and investing in AI and deep tech startups, and contributes an AI column to MIT Technology Review Brazil.

Ranked among the top global experts in AI and AI ethics (Thinkers360), Spitz is the bestselling author of five books, including Disrupt With Impact. A globally sought-after keynote speaker and advisor, he works with CEOs, investors, and policymakers to navigate the “Intelligence Shift” - the transformation of decision-making in an AI-driven world.

THE 6 PILLARS OF THE DISRUPTIVE FUTURES INSTITUTE

The Disruptive Futures Institute (San Francisco) ecosystem is designed as an Operating System for Unpredictability:

• Futures Intelligence & Anticipatory Capabilities: The skills for futures fluency and resiliency. Focused on developing the cognitive and operational skills required to scale foresight across an organization.

• Strategic Foresight Advisory - Techistential: The frameworks for adaptive strategies. High-level advisory for leadership teams, investors, and boards using the Disruptive Futures Institute’s proprietary frameworks to build resilience.

• Techistential Center for Human & Artificial Intelligence: The future of agency and decision-making. Researching the impacts of AI on governance, ethics, and human decision-making agency.

• DFI Metaruptions Center for Emerging Fields: The unlocking of value creation beyond convergence. Strategic intelligence focused on the dissolution of industry boundaries and the birth of emerging fields, business models, and breakthroughs.

• DFI Geopolitics Center for Grand Strategy: The navigation of global disorder in a fracturing world of the “Three Gs” - Geopolitics, Geoeconomics, Geotechnology.

• DFI Nature & Climate Academy: The transition to sustainable futures. The flagship education center for climate foresight, decarbonization, and the energy transition.

FOLLOW THE DISRUPTIVE FUTURES INSTITUTE

► Disruptive Futures Institute Substack - Thriving on Disruption: Metaruptions Briefings: https://thrivingondisruption.substack.com

► Instagram: https://www.instagram.com/disrupt_futures / @disrupt_futures

► X: https://twitter.com/disrupt_futures / @disrupt_futures

► LinkedIn: https://www.linkedin.com/company/disruptivefuturesinstitute
